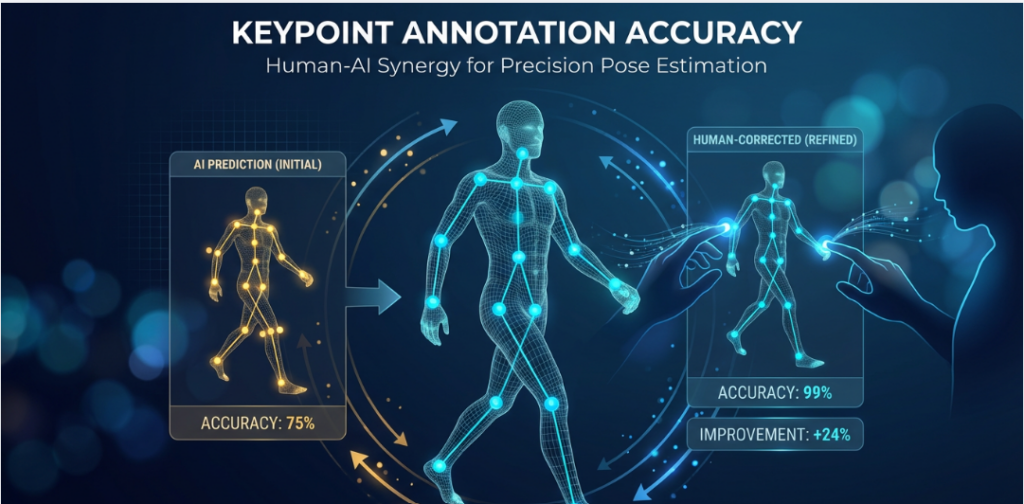

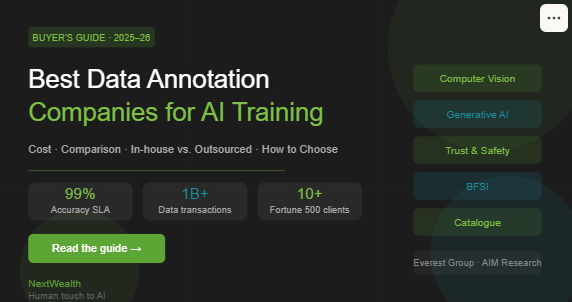

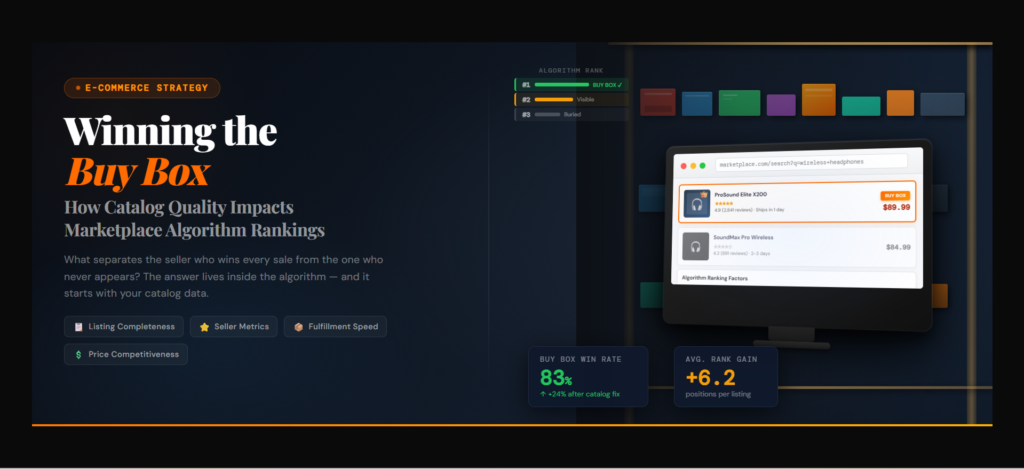

Why Your Marketplace AI Keeps Getting It Wrong — And How Human-in-the-Loop Quality Fixes It

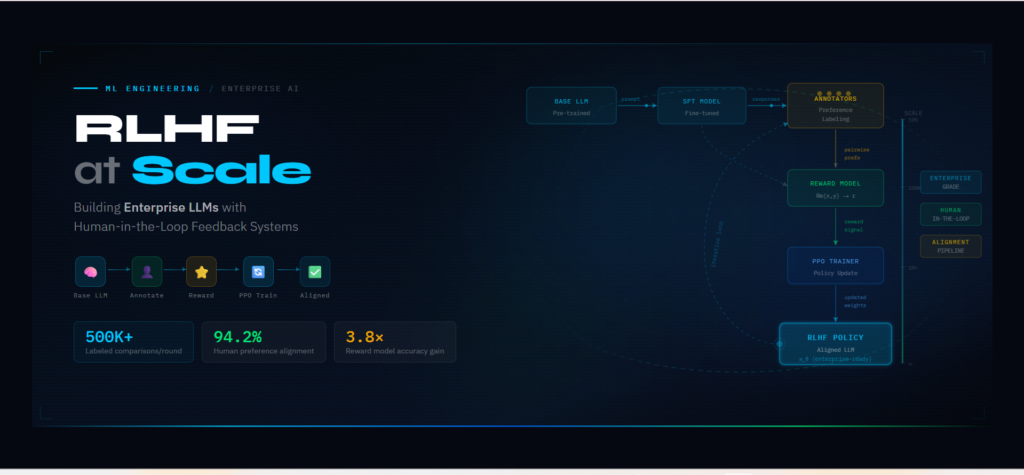

Your AI model is only as reliable as the data that trained it. Most marketplace AI teams know this in principle. Few have built the quality infrastructure to act on it. The result is a pattern that repeats across e-commerce platforms at scale: a model that performs well on benchmarks but degrades in production — […]
