Author: Sagar Srivastava

-

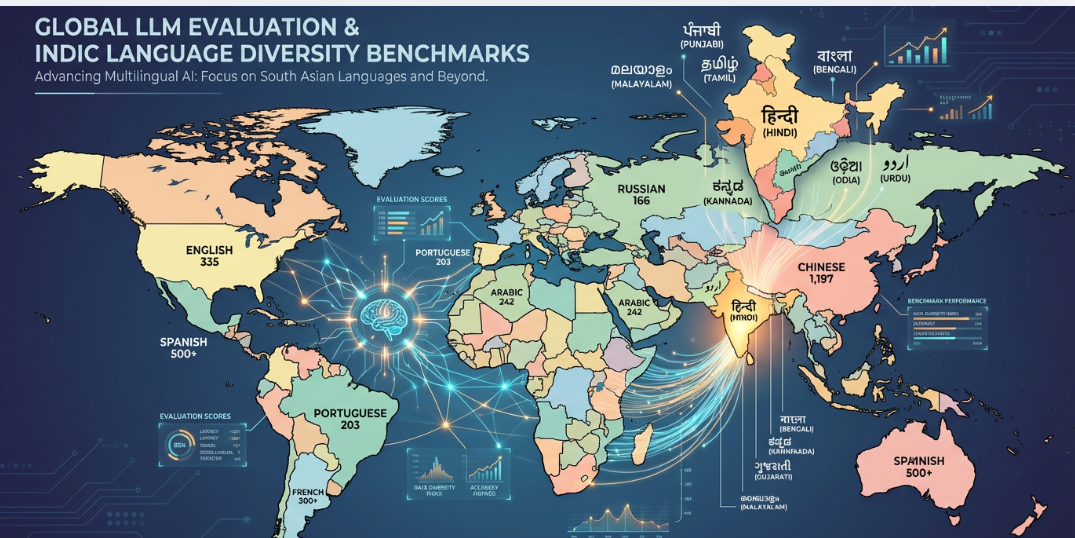

Evaluating Large Language Models: Global Advances and the Need for Indic-Specific Benchmarks

As large language models (LLMs) evolve in scale and capability, evaluating their performance, safety, and applicability has become a critical concern. Globally, research has matured to incorporate multi-dimensional benchmarks addressing robustness, fairness, factuality, and task generalization across domains and modalities. Despite these advances, significant gaps remain in evaluating LLMs for low-resource languages, particularly those in…

-

RLHF for Enterprise LLMs: Services, Costs, and How to Choose the Right Partner

Fine-tuning a large language model is hard. Fine-tuning it to behave reliably, consistently, and safely in your domain is harder. RLHF is where most enterprise LLM projects either get serious or get stuck. This article covers who offers RLHF annotation services at enterprise scale, what the work actually costs, and how to evaluate a partner…

-

Conversational AI Voice Models: Metrics and Beyond with NextWealth

Conversational AI voice models are increasingly crucial to bridging the gap between humans and computers through natural, spoken conversations. These models power virtual assistants, customer support, healthcare bots, and many real-time applications requiring fluid dialogue and empathetic communication. Evaluating and refining voice-based conversational AI involves a rich set of performance metrics that ensure not only linguistic accuracy but also…