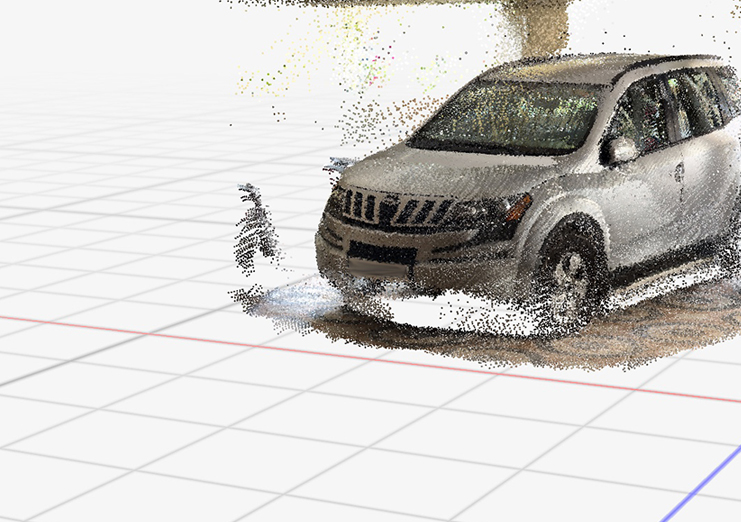

Precision LiDAR & 3D Point Cloud Annotation Services

Empowering autonomous systems with Human-in-the-Loop (HITL) LiDAR annotation for superior depth perception, accurate object detection, and safe navigation.

NextWealth LiDAR Annotation Services

De-risking AI deployment with human-precise LiDAR annotation

At NextWealth, we specialize in high-precision LiDAR data annotation, combining advanced tooling with expert human intelligence. Our teams transform complex LiDAR sensor data into reliable training datasets that power autonomous vehicles, ADAS, robotics, smart cities, surveying, and geospatial applications.

With 4M+ annotated multi-modal frames and 99.3% cross-sensor accuracy, we help enterprises scale AI safely, delivering the spatial intelligence needed for real-world 3D perception.

Core LiDAR Annotation & Labelling Services

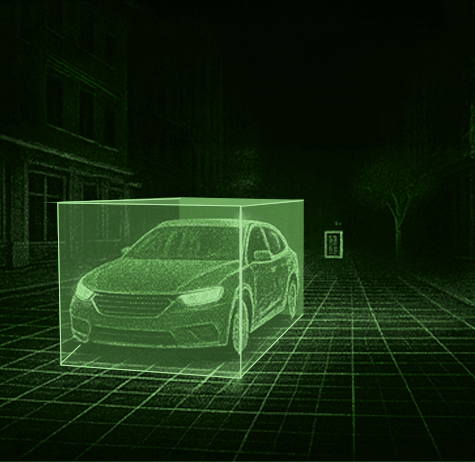

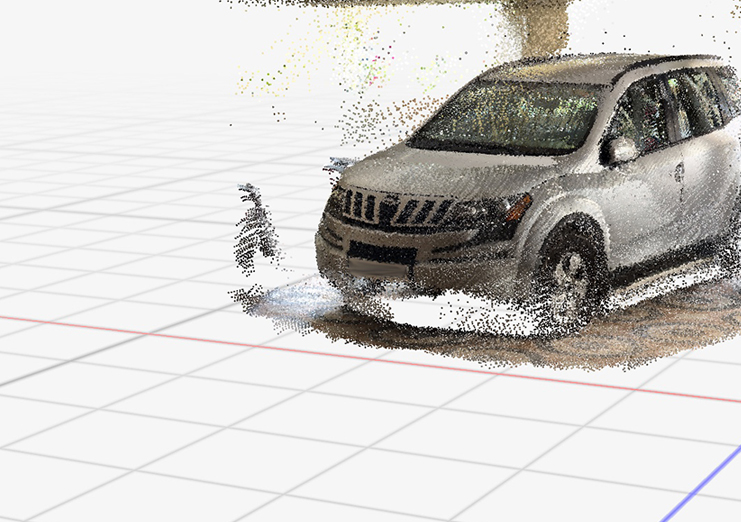

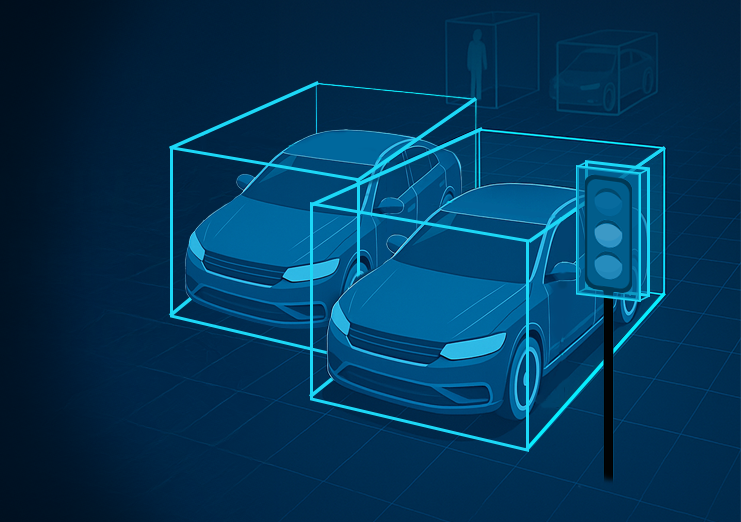

3D Cuboid Annotation

Highly accurate 3D bounding boxes in LiDAR point clouds enable AI to detect, track, and avoid obstacles, which is critical for autonomous driving and industrial safety.

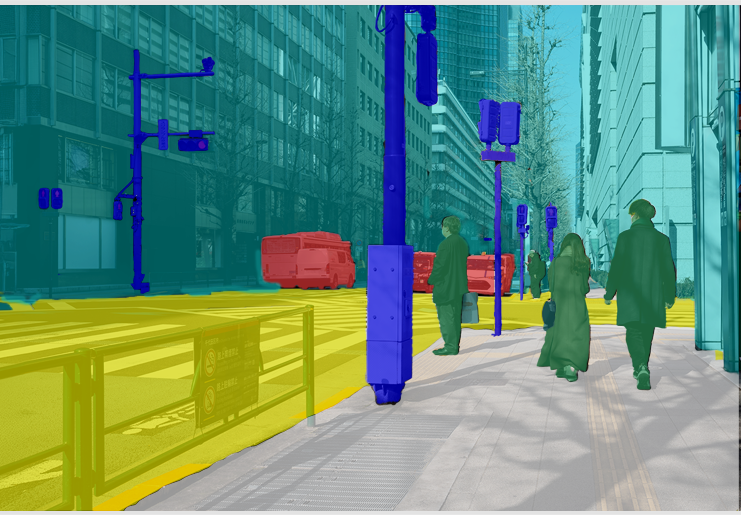

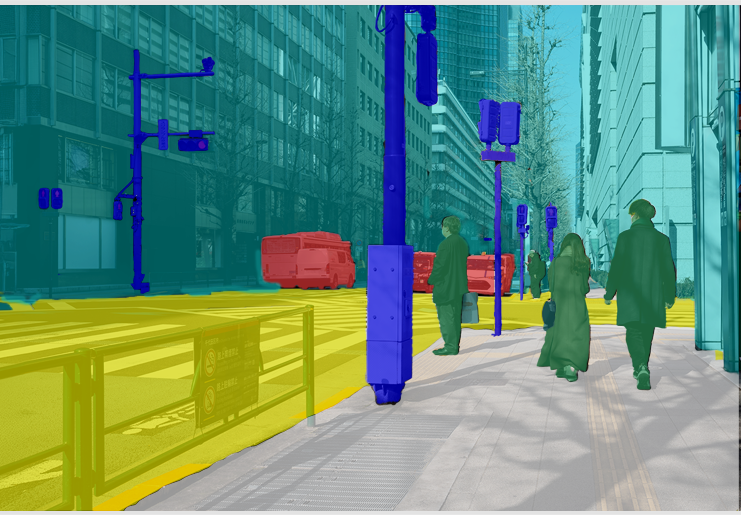

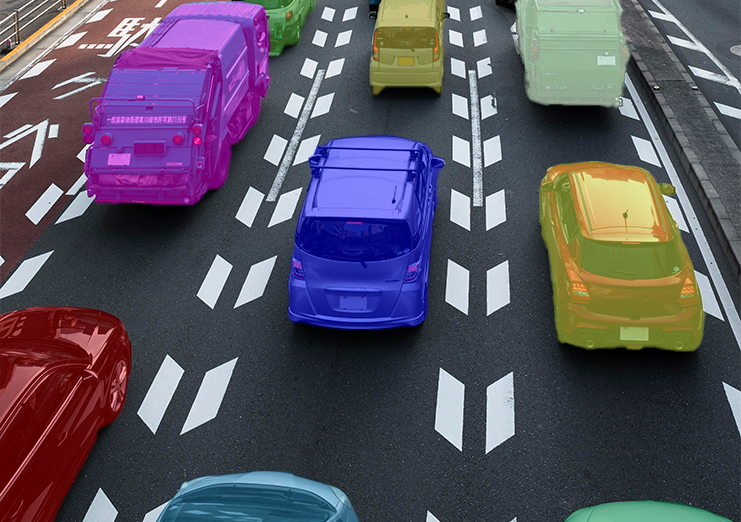

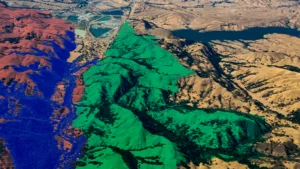

Semantic Segmentation

Each point in the LiDAR dataset is classified (roads, vehicles, vegetation, infrastructure), giving AI models context-rich environmental understanding for navigation, city planning, and environmental monitoring.

Polygon & Polyline Annotation

Precise tracing of lanes, fences, curbs, and irregular structures supports lane-keeping, intersection navigation, and boundary detection in dynamic environments.

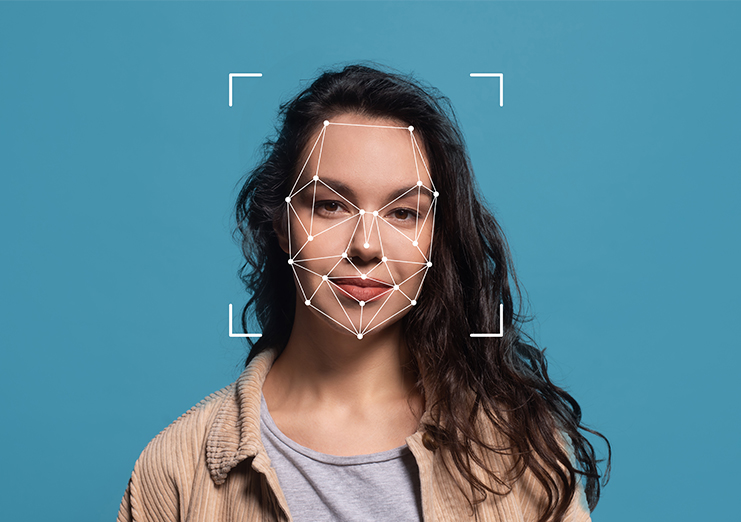

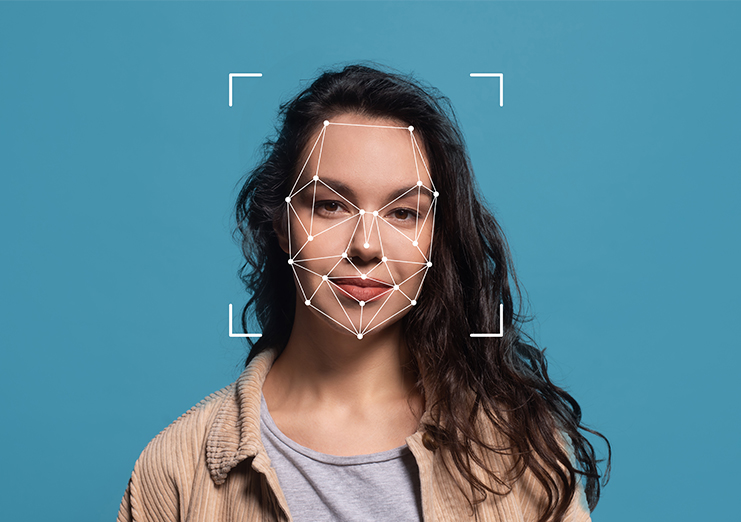

Landmark & Key-point Annotation

Marking structural or anatomical points supports shape recognition and feature detection, which are essential for robotics, healthcare imaging, and architectural analysis.

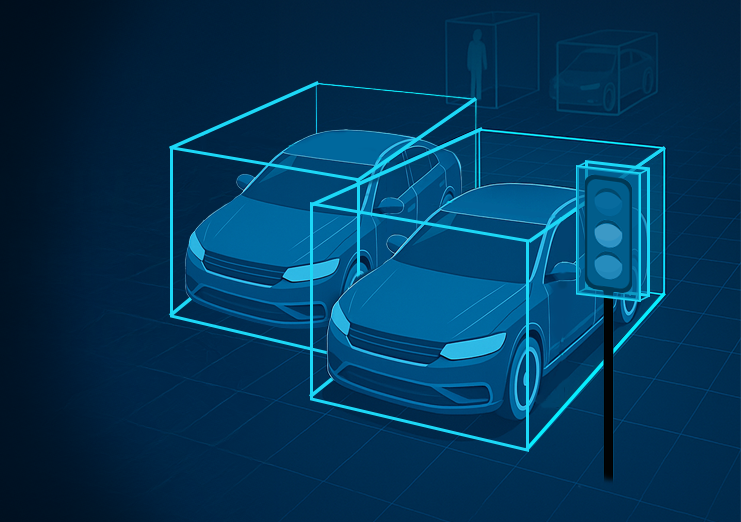

Sensor Fusion Annotation

Aligning LiDAR point clouds with RGB/camera data for richer perception, delivering holistic datasets that strengthen autonomous driving, robotics, and smart infrastructure models.

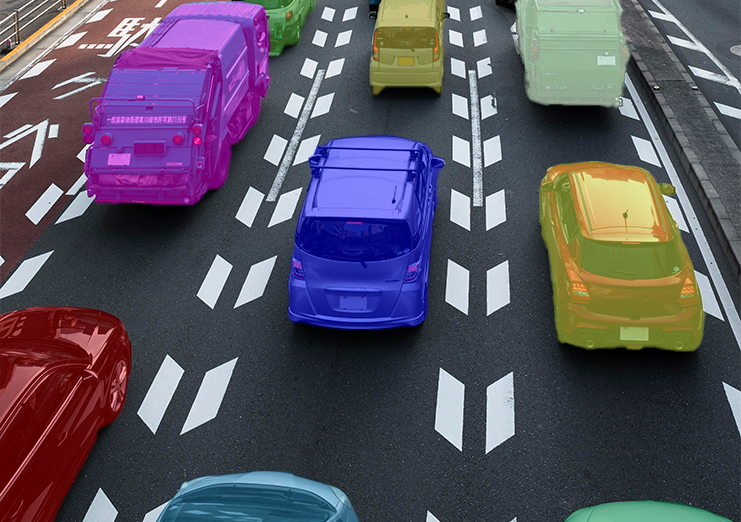

Industry Applications – Proof in Action

Autonomous Vehicles & ADAS

- Delivered 500K+ multi-sensor annotated LiDAR frames for a global AV OEM, enabling safer obstacle detection, pedestrian tracking, and lane recognition.

- Supported Level 4 & 5 pilot programs with temporal consistency across LiDAR + camera fusion.

Smart Cities & Infrastructure

- Generated 3D urban maps for semi-urban German roads to optimize traffic light placement and reduce congestion.

- Partnered with municipal authorities to deliver annotated LiDAR datasets for intelligent infrastructure and urban planning projects.

Robotics & Industrial Automation

- Enabled autonomous warehouse robots to navigate safely by annotating dense LiDAR point clouds with hazard detection markers.

- Helped an industrial automation client cut operational downtime by 30% through precise obstacle recognition.

Geospatial & Surveying

- Processed 2M+ LiDAR frames for a European surveying firm, cutting turnaround time from weeks to days.

- Enabled accurate terrain classification and environmental monitoring at production scale.

Railways & Transportation

- Annotated 80K+ km of railway assets (tracks, poles, signals, overhead wires) using LiDAR + panoramic imagery.

- Improved asset management efficiency by 40%, supporting predictive maintenance and safety compliance.

Agriculture & Forestry

- Supported precision irrigation projects by mapping farmlands and elevation shifts with annotated LiDAR data.

- Enabled crop density monitoring and vegetation analysis for large-scale agri-tech platforms.

Security & Defence

- Assisted defence partners in annotating LiDAR datasets for perimeter surveillance and low-visibility threat detection.

- Delivered annotated terrain awareness maps that improved border security monitoring efficiency.

NextWealth's Contribution to Real-World Wins: Global Fleet Safety at Scale

A global fleet management leader partnered with NextWealth to annotate and validate over 1 million hours of telematics video data.

NextWealth’s Approach to Driver Behaviour Analysis

At NextWealth, we go beyond data collection – we deliver actionable insights that empower safer and smarter driving.

Our unique blend of AI automation + Human-in-the-Loop validation ensures unmatched accuracy.

What clients gain with NextWealth:

- 95%+ accuracy in behaviour detection with HITL validation

- Reduced false alerts → drivers only receive actionable warnings

- Scalable datasets to power fleet monitoring, AV training, and insurance models

- Full compliance with global data security and privacy standards

Simply put: we help you build reliable driver monitoring systems that scale with your business.

Successful client stories and case studies

A deep dive into our journey of partnering with global business giants.

Why partner with us

Our services are tailored to elevate the efficiency of your AI/ML processes

Managed Services | Captive Services | Staffing Services

- 5,000+ Skilled Employees

- 1B+ Data Transactions

- 40+ Live Projects

- 10+ Fortune 500 Clients

- 73 NPS Score

Types of Annotation We Do:

Visual Annotation (Driver Monitoring via Camera)

- Head Pose & Gaze Annotation: Labeling where the driver is looking (road, dashboard, phone, mirrors).

- Facial Expression Annotation: Drowsiness, yawning, distraction, anger, stress, smoking, talking.

- Eye State Annotation: Open/closed, blink rate, eye closure duration.

Audio Annotation (If In-cabin Audio is Captured)

- Voice Activity Detection: Speaking, shouting, talking on phone.

- Emotion Annotation (Speech-based): Calm, angry, stressed, distracted.

- External Sounds Annotation: Honking, sirens, sudden loud noises that may affect driver behavior.

Sensor / Telemetry Annotation

- Driving Event Labeling: Hard braking, rapid acceleration, sharp turns, overspeeding.

- Risky Manoeuvre Annotation: Tailgating, lane departure, sudden swerves.

- Distraction & Inattention Events: Identified from steering, brake, or accelerator patterns.

- Fatigue Detection Signals: Micro-corrections in steering, delayed reactions.
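As an illustration of driving event labeling from telemetry, the sketch below tags hard braking and rapid acceleration in a speed series. The function name, the sampling interval, and both thresholds are assumptions made for this example and are not NextWealth's actual rules; a production pipeline would tune thresholds per vehicle class and have annotators validate the resulting events.

```python
# Hypothetical thresholds in m/s^2; real projects would calibrate these.
HARD_BRAKE_MPS2 = -3.0
RAPID_ACCEL_MPS2 = 2.5


def label_events(speeds_mps: list[float], dt_s: float) -> list[tuple[int, str]]:
    """Return (sample_index, event_name) pairs for a uniformly sampled speed series."""
    events = []
    for i in range(1, len(speeds_mps)):
        # Finite-difference acceleration between consecutive samples.
        accel = (speeds_mps[i] - speeds_mps[i - 1]) / dt_s
        if accel <= HARD_BRAKE_MPS2:
            events.append((i, "hard_braking"))
        elif accel >= RAPID_ACCEL_MPS2:
            events.append((i, "rapid_acceleration"))
    return events


speeds = [20.0, 20.0, 16.0, 12.0, 12.0, 15.0]  # one sample per second
events = label_events(speeds, dt_s=1.0)
print(events)
```

Events produced this way would serve as pre-labels that human reviewers confirm or reject against the video.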

Environment & Context Annotation

- Traffic Condition Annotation: Dense traffic, clear road, pedestrian presence.

- Weather/Lighting Condition Annotation: Rain, fog, night/day (to see if behavior changes).

- Road Type Annotation: Highway, city streets, rural roads.

Ready to make every mile safer?

Enhance safety, reduce risks, and transform your transportation business with NextWealth’s Driver Behaviour Analysis services.

Explore Resources

Know how we are accelerating business growth by enabling effectiveness in AI/ML

The Real Cost of Scaling Autonomous Retail: What Data Operations Actually Look Like

Multimodal LLMs in 2026: Annotation Challenges When AI Needs to See, Hear, and Read

FAQs

What is LiDAR annotation and why is it important?

LiDAR annotation involves labeling 3D point cloud data captured by LiDAR sensors. Annotators create 3D bounding boxes, semantic segmentation, and object classifications in three-dimensional space. This data trains AI models for autonomous vehicles, robotics, smart cities, and mapping applications that require precise spatial understanding.

How does LiDAR differ from camera-based object detection?

Cameras capture 2D visual information and struggle in poor lighting. LiDAR uses laser pulses to generate precise 3D maps of the surrounding environment and, as an active sensor, operates effectively in darkness, although dense fog, heavy rain, or snow can reduce its range and point density. LiDAR provides accurate depth and distance measurements that cameras cannot match, making it essential for autonomous navigation.

What is 3D cuboid annotation in LiDAR data?

3D cuboid annotation creates three-dimensional bounding boxes around objects in point cloud data. Unlike 2D boxes, 3D cuboids define object height, width, length, and orientation in space. This enables AI to infer object size, position, and rotation, which are crucial for autonomous vehicles to navigate safely.
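To make the cuboid description concrete, here is a minimal sketch of how such a label could be represented and used to test which LiDAR points fall inside it. The `Cuboid` class, its field names, and the convention that rotation is a single yaw angle about the vertical axis are assumptions for illustration, not a specific tool's format.

```python
import math
from dataclasses import dataclass


@dataclass
class Cuboid:
    """Hypothetical 3D box label: center, size, and yaw about the z axis."""
    cx: float
    cy: float
    cz: float
    length: float  # extent along the box's own x axis (heading direction)
    width: float   # extent along the box's own y axis
    height: float  # extent along z
    yaw: float     # rotation about z, in radians

    def contains(self, x: float, y: float, z: float) -> bool:
        """Return True if the point lies inside (or on the surface of) the box."""
        # Translate so the box center becomes the origin.
        dx, dy, dz = x - self.cx, y - self.cy, z - self.cz
        # Rotate by -yaw to express the point in the box's local frame.
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        local_x = c * dx + s * dy
        local_y = -s * dx + c * dy
        return (abs(local_x) <= self.length / 2
                and abs(local_y) <= self.width / 2
                and abs(dz) <= self.height / 2)


def count_points_inside(box: Cuboid, points: list[tuple[float, float, float]]) -> int:
    """Count how many (x, y, z) points the box encloses."""
    return sum(box.contains(*p) for p in points)


# A car-sized box heading along the world y axis (yaw = 90 degrees).
car = Cuboid(cx=10.0, cy=2.0, cz=0.8, length=4.5, width=1.8, height=1.6, yaw=math.pi / 2)
points = [(10.0, 2.0, 0.8), (10.0, 4.0, 0.8), (12.0, 2.0, 0.8), (10.0, 2.0, 3.0)]
print(count_points_inside(car, points))  # prints 2
```

The point count inside a box is a common sanity check: a cuboid that encloses very few points may be misplaced or may cover a heavily occluded object.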

How do you handle sparse or partially occluded LiDAR points in complex environments?

Annotators work with fused RGB–LiDAR views, so even sparse clusters can be interpreted in the context of the image. Guidelines specify minimum point thresholds, extrapolation rules, and when to flag truly ambiguous objects for expert review rather than forcing low‑confidence labels.
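A guideline of this kind can be pictured as a small decision rule. The sketch below is only an illustration: the `Action` names, the `triage_cluster` function, and both threshold values are hypothetical, and real guidelines would typically vary thresholds by object class and distance.

```python
from enum import Enum


class Action(Enum):
    LABEL = "label"                  # enough points to annotate directly
    EXTRAPOLATE = "extrapolate"      # complete the shape using camera context
    EXPERT_REVIEW = "expert_review"  # too ambiguous, escalate


# Hypothetical thresholds, chosen only for this example.
MIN_POINTS_TO_LABEL = 20
MIN_POINTS_TO_EXTRAPOLATE = 5


def triage_cluster(point_count: int, visible_in_camera: bool) -> Action:
    """Decide how an annotator should treat a sparse or occluded cluster."""
    if point_count >= MIN_POINTS_TO_LABEL:
        return Action.LABEL
    if point_count >= MIN_POINTS_TO_EXTRAPOLATE and visible_in_camera:
        return Action.EXTRAPOLATE
    return Action.EXPERT_REVIEW


print(triage_cluster(35, visible_in_camera=False))
print(triage_cluster(8, visible_in_camera=True))
print(triage_cluster(8, visible_in_camera=False))
```

Encoding the rule explicitly keeps decisions consistent across annotators and makes the escalation path auditable.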

Which industries use LiDAR annotation services?

Autonomous vehicle manufacturers use it for navigation systems. Smart cities employ it for infrastructure planning. Geospatial companies need it for terrain mapping. Agriculture uses it for crop monitoring. Robotics requires it for warehouse automation. Railways apply it for asset management. Security and defense use it for surveillance.

How does Human‑in‑the‑Loop interact with automation in your LiDAR pipelines?

Automation handles pre‑labeling and interpolation, while HITL teams focus on complex scenes, rare classes, and model-output QC. This combination lets clients scale to millions of frames without sacrificing precision in safety‑critical or high‑value regions.
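One way to picture this split is a routing step that sends model pre-labels either to automatic acceptance or to a human review queue. The sketch below assumes a per-label confidence score, a set of rare classes, and an acceptance threshold; all of these names and values are hypothetical and stand in for whatever a given project defines.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    frame_id: int
    object_class: str
    confidence: float  # model score in [0, 1]


# Hypothetical project settings for this example.
RARE_CLASSES = {"animal", "construction_vehicle"}
AUTO_ACCEPT_CONFIDENCE = 0.9


def needs_human_review(label: PreLabel) -> bool:
    """Rare classes and low-confidence pre-labels always go to annotators."""
    return label.object_class in RARE_CLASSES or label.confidence < AUTO_ACCEPT_CONFIDENCE


def split_queue(labels: list[PreLabel]) -> tuple[list[PreLabel], list[PreLabel]]:
    """Return (auto_accepted, human_review) lists, preserving input order."""
    auto, review = [], []
    for label in labels:
        (review if needs_human_review(label) else auto).append(label)
    return auto, review


batch = [
    PreLabel(1, "car", 0.97),
    PreLabel(1, "pedestrian", 0.62),
    PreLabel(2, "animal", 0.95),
]
auto, review = split_queue(batch)
print(len(auto), len(review))  # prints: 1 2
```

In practice a sample of the auto-accepted labels would usually still be audited, so that model drift is caught by quality control.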

How does sensor fusion annotation improve AI performance?

Sensor fusion combines LiDAR point clouds with camera RGB data, creating datasets that leverage both 3D spatial accuracy and visual appearance. Annotations are aligned across both modalities, providing AI models with richer context. This improves object classification, especially for distinguishing similar objects or handling partial occlusions.
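Aligning annotations across modalities relies on projecting LiDAR points into the camera image. The sketch below assumes a simple pinhole camera without lens distortion and a known LiDAR-to-camera calibration; the function name, the axis conventions, and the calibration values are illustrative assumptions rather than any particular sensor setup.

```python
from typing import Optional

Vec3 = tuple[float, float, float]
Mat3 = tuple[Vec3, Vec3, Vec3]


def lidar_to_pixel(point: Vec3, rotation: Mat3, translation: Vec3,
                   fx: float, fy: float, cx: float, cy: float
                   ) -> Optional[tuple[float, float]]:
    """Project a LiDAR point to pixel coordinates, or None if behind the camera."""
    # Extrinsics: p_cam = R * p_lidar + t
    x, y, z = (sum(r * p for r, p in zip(row, point)) + t
               for row, t in zip(rotation, translation))
    if z <= 0:
        return None  # the point is behind the image plane
    # Pinhole intrinsics: perspective divide, then scale and shift.
    return (fx * x / z + cx, fy * y / z + cy)


# Assumed conventions: LiDAR x forward, y left, z up;
# camera x right, y down, z forward. Sensors are co-located here (t = 0).
R: Mat3 = ((0.0, -1.0, 0.0),
           (0.0, 0.0, -1.0),
           (1.0, 0.0, 0.0))
t: Vec3 = (0.0, 0.0, 0.0)

pixel = lidar_to_pixel((10.0, 1.0, 0.5), R, t, fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)
print(pixel)  # prints (540.0, 310.0)
```

With this mapping, a cuboid drawn in the point cloud can be overlaid on the image so annotators can verify that both views agree on the same object.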

What is the difference between 2D and 3D point cloud annotation?

2D annotation works on flat images, while 3D point cloud annotation labels objects in three-dimensional space. 3D annotation requires specialized tools and training to navigate point clouds, understand depth relationships, and create accurate spatial boundaries. It’s more complex but provides essential depth information.

Why Choose NextWealth?

Quality Assurance

Scalability

Data Security